The Cost of Error and its role in mitigating risks

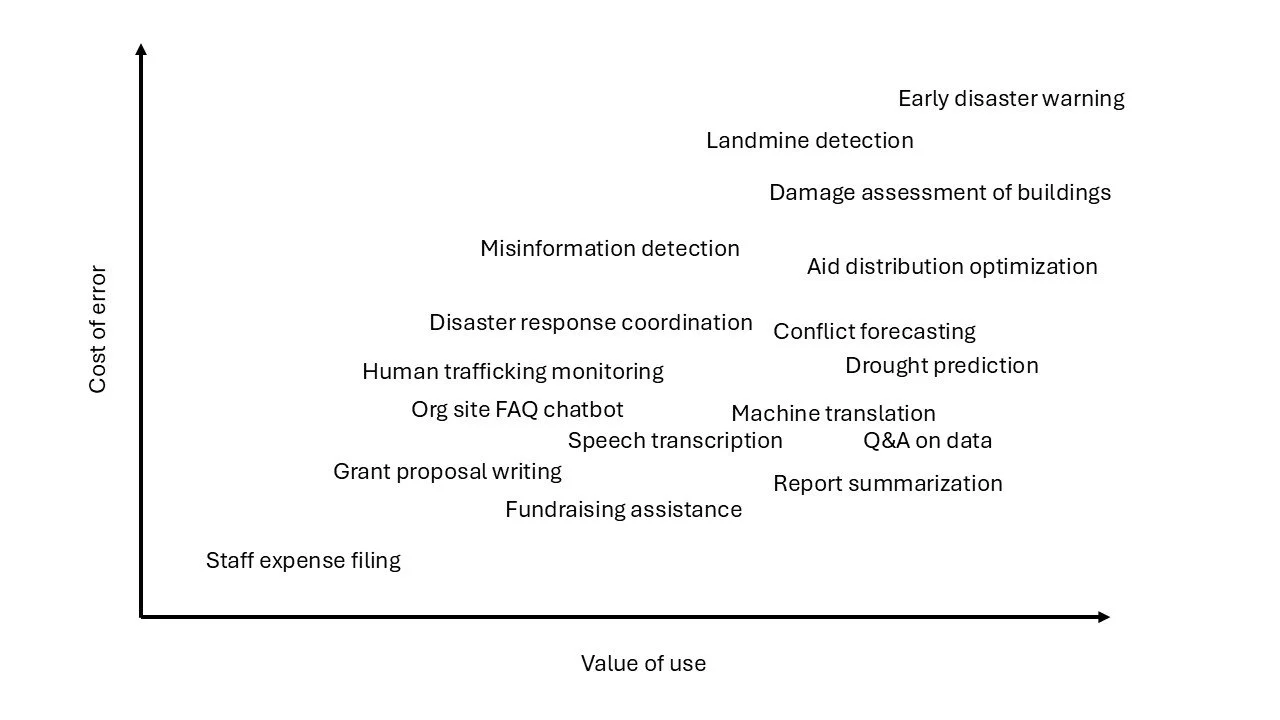

Not all errors carry the same weight. When AI is used in low‑risk contexts, such as drafting grant proposals, an inaccurate output may cause inconvenience but rarely leads to serious harm. In contrast, when AI supports high‑risk processes — early disaster warnings, landmine detection, or other life‑critical decisions — an error can produce severe, even irreversible, consequences.

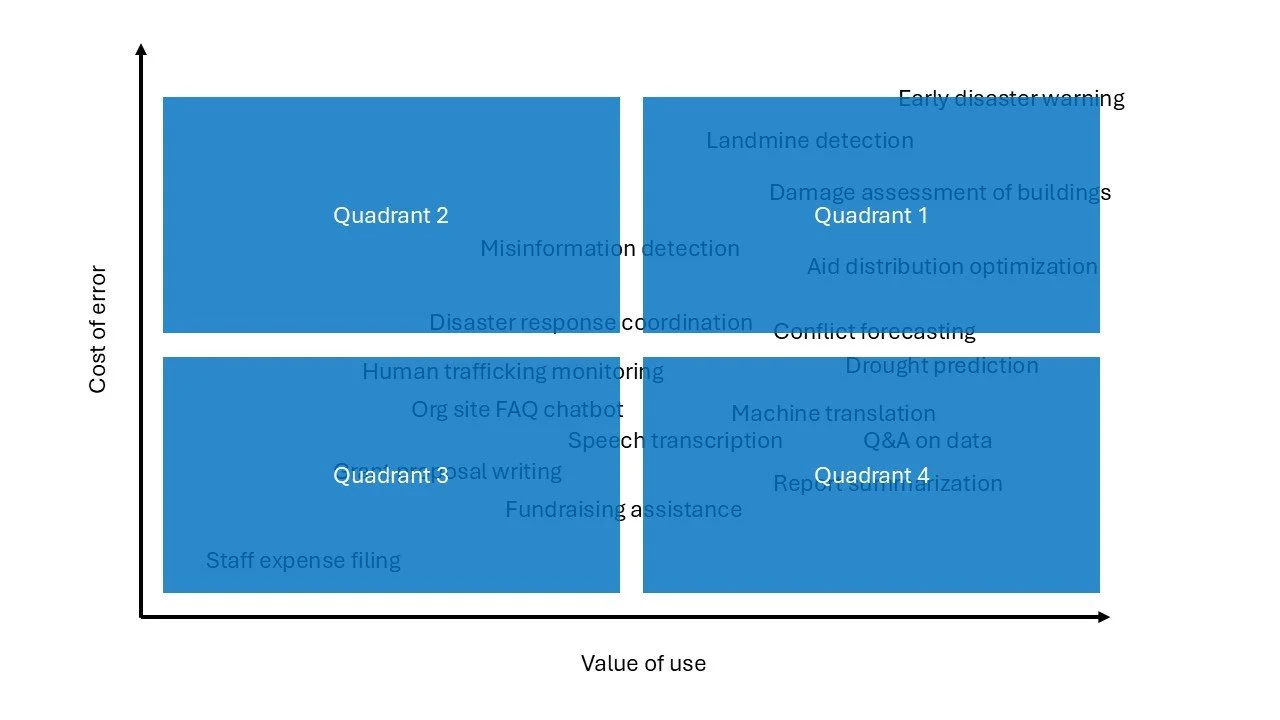

Thinking in terms of the cost of error (the human, financial, legal, ethical, or operational impact if the AI is wrong) helps you design a more resilient use case. Picture this cost as a continuum from low to high, then locate your specific use case along it.

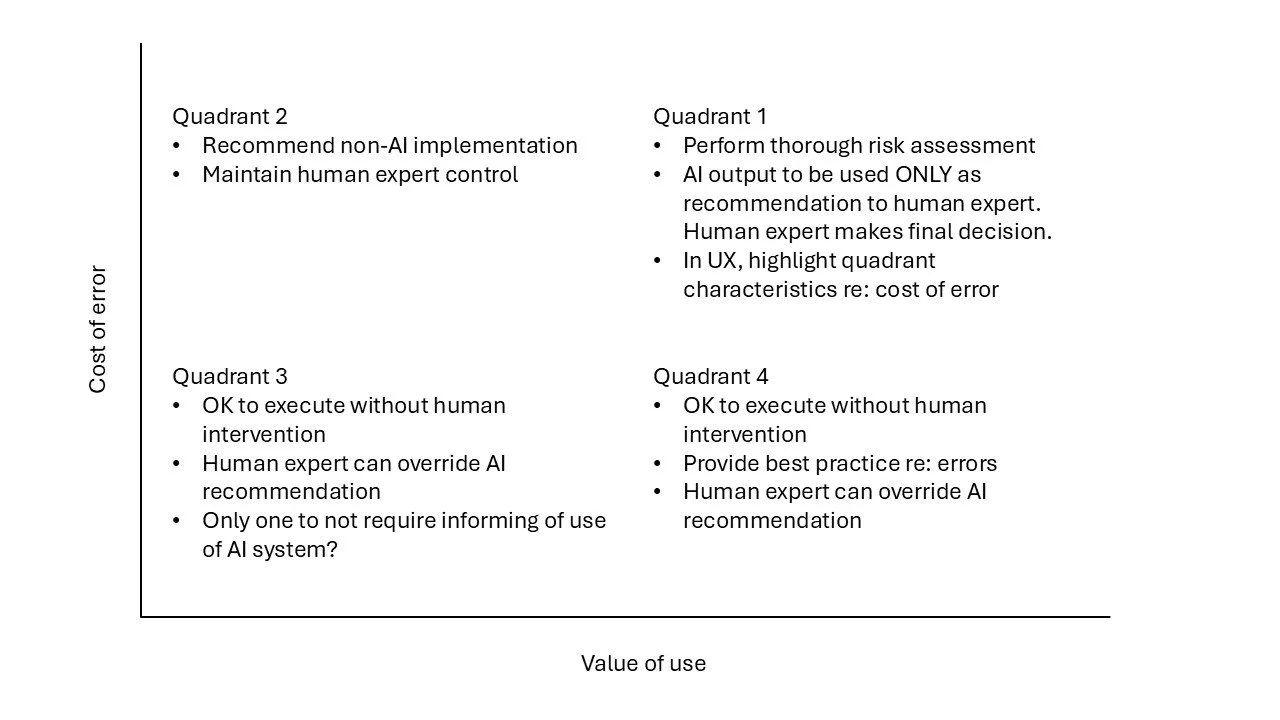

When the cost of error is low, acting directly on AI output without human review is generally acceptable.

When the cost of error is high, or anything other than clearly low, AI output should be treated strictly as a recommendation that a human with the relevant expertise must review before any action is taken.
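For teams that want to encode this rule in a workflow, the sketch below shows one possible way to express it. The names `CostOfError`, `Handling`, and `route_ai_output` are hypothetical and chosen for illustration only, as are the `MODERATE` and `UNKNOWN` levels, which stand in for "anything other than clearly low".

```python
from enum import Enum


class CostOfError(Enum):
    """Illustrative positions on the low-to-high continuum (assumed labels)."""
    LOW = "low"
    MODERATE = "moderate"
    HIGH = "high"
    UNKNOWN = "unknown"


class Handling(Enum):
    """How the AI output may be used (assumed labels)."""
    ACT_DIRECTLY = "act_directly"
    HUMAN_REVIEW = "human_review"


def route_ai_output(cost: CostOfError) -> Handling:
    """Allow direct action only when the cost of error is clearly low.

    Every other level, including an unassessed one, is routed to
    human review before any action is taken.
    """
    if cost is CostOfError.LOW:
        return Handling.ACT_DIRECTLY
    return Handling.HUMAN_REVIEW


if __name__ == "__main__":
    for level in CostOfError:
        print(f"{level.value}: {route_ai_output(level).value}")
```

The function defaults to human review, so a use case whose cost of error has not been assessed is handled the same way as a high-cost one.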

In humanitarian settings, the cost of error must always be assessed through the lens of protection principles and the imperative to do no harm, ensuring that AI augments decision‑making without introducing unacceptable risk.